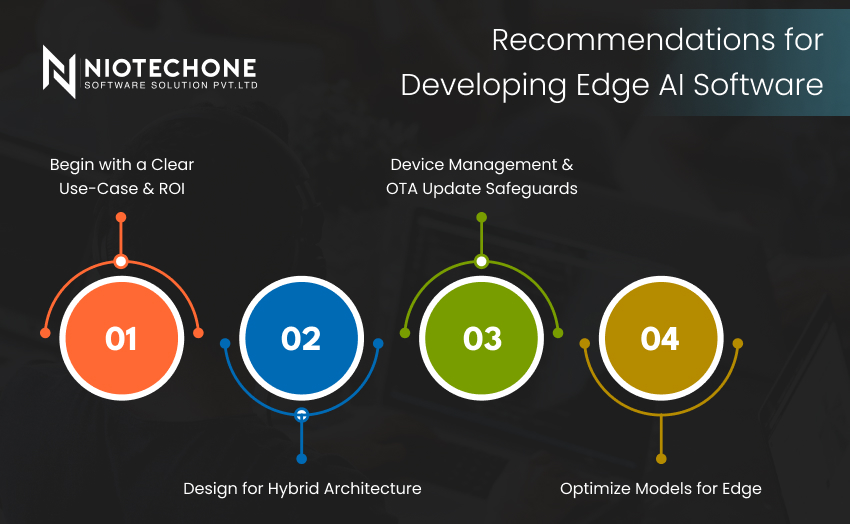

For organizations working on complex software development, .NET Core application development, or enterprise mobility solutions, a few best practices can make implementing Edge AI easier and more successful.

Begin with a Clear Use-Case & ROI

Not every problem needs to be solved with edge inference. Weigh your latency, privacy, connectivity, and cost requirements to determine whether the benefits justify building an Edge AI solution.

Optimize Models for Edge

To support edge inference, shrink models with techniques such as quantization, pruning, and lightweight architectures. Choose frameworks and formats with built-in support for on-device inference (e.g., ML.NET, ONNX, TensorFlow Lite) so the model integrates cleanly with your .NET or mobile stack.
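To make quantization concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. The function names (`quantize_int8`, `dequantize_int8`) and the sample weights are illustrative assumptions, not part of any framework; in practice the tooling that ships with your chosen framework would handle this step.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # The scale maps the largest magnitude to 127; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the int8 values."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)

# The round-trip error per weight is bounded by half the scale.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now fits in one byte instead of four, at the cost of a small, bounded rounding error. Whether that trade-off is acceptable depends on the model and should be checked against a validation set.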

Design for Hybrid Architecture

Make sure the cloud and the edge complement each other. Use the cloud centrally to train and manage models, run analytics, and store known-good backup copies of each model; use the edge to run inference and take the immediate actions that depend on it.
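The split of responsibilities can be sketched as follows. This is only an illustration under stated assumptions: the `EdgeNode` class, the threshold "model", the `shutdown_valve` action, and the `upload` callback are all hypothetical stand-ins for a real inference runtime and a real cloud client.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class EdgeNode:
    model: Callable[[float], bool]  # local inference function (hypothetical)
    model_version: str              # version assigned by the cloud side
    outbox: List[Dict] = field(default_factory=list)  # telemetry awaiting upload

    def handle_reading(self, value: float) -> str:
        """Infer locally and act immediately, without a network round trip."""
        anomaly = self.model(value)
        action = "shutdown_valve" if anomaly else "none"
        # Buffer the result so the cloud can use it for analytics and retraining.
        self.outbox.append(
            {"value": value, "anomaly": anomaly, "model_version": self.model_version}
        )
        return action

    def sync(self, upload: Callable[[List[Dict]], None]) -> int:
        """Send buffered telemetry when connectivity is available."""
        batch = list(self.outbox)
        upload(batch)        # if this raises, the records stay buffered
        self.outbox.clear()
        return len(batch)


# A list stands in for the cloud analytics store in this sketch.
cloud_store: List[Dict] = []
node = EdgeNode(model=lambda v: v > 80.0, model_version="1.0.0")
actions = [node.handle_reading(v) for v in (42.0, 95.5)]
sent = node.sync(cloud_store.extend)
```

The device keeps acting on readings while offline, and the cloud receives the full history once a connection is available.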

Device Management & OTA Update Safeguards

Edge devices need over-the-air (OTA) update safeguards so that model and firmware updates can be delivered reliably. Every model or firmware update must be applied securely and completely, so that a device is never left running a partial or tampered image. Use versioning and keep a rollback option so a faulty update can be reverted.
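One way to combine these safeguards is sketched below: verify a digest before activating an update, run a health check afterwards, and keep the previous version for rollback. `ModelPackage`, `ModelSlot`, `make_package`, and the `health_check` callback are hypothetical names introduced for illustration. A SHA-256 digest only detects corruption or truncation; a production system would also need to verify a cryptographic signature to establish who produced the package.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ModelPackage:
    version: str
    payload: bytes
    sha256: str  # digest published alongside the package


class ModelSlot:
    """Holds the active model plus the last known-good one for rollback."""

    def __init__(self, initial: ModelPackage) -> None:
        self.active = initial
        self.previous: Optional[ModelPackage] = None

    def apply_update(
        self, pkg: ModelPackage, health_check: Callable[[ModelPackage], bool]
    ) -> bool:
        # Reject incomplete or altered downloads before touching the active model.
        if hashlib.sha256(pkg.payload).hexdigest() != pkg.sha256:
            return False
        last_good = self.active
        self.active = pkg
        # Revert at once if the new model fails its post-install check.
        if not health_check(pkg):
            self.active = last_good
            return False
        self.previous = last_good
        return True

    def rollback(self) -> None:
        """Return to the previous version, if one is stored."""
        if self.previous is not None:
            self.active, self.previous = self.previous, None


def make_package(version: str, payload: bytes) -> ModelPackage:
    return ModelPackage(version, payload, hashlib.sha256(payload).hexdigest())


slot = ModelSlot(make_package("1.0.0", b"model-v1"))
ok = slot.apply_update(make_package("1.1.0", b"model-v2"), health_check=lambda p: True)
corrupted = ModelPackage("1.2.0", b"truncated", sha256="0" * 64)
rejected = slot.apply_update(corrupted, health_check=lambda p: True)
unhealthy = slot.apply_update(make_package("1.3.0", b"model-v4"), health_check=lambda p: False)
```

In this sketch a failed digest or health check leaves the device on the version it was already running, and `rollback()` remains available for problems discovered later.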